2021

Marco, A., Baumann, D., Khadiv, M., Hennig, P., Righetti, L., Trimpe, S.

Robot Learning with Crash Constraints

IEEE Robotics and Automation Letters, 6(2):1439-1446, IEEE, February 2021 (article)

2020

Stockinger, G., Janka, H., Kresse, D., Melson, T., Ertl, T., Gabler, M., Gessner, A., Wongwathanarat, A., Tolstov, A., Leung, S., Nomoto, K., Heger, A.

Three-dimensional models of core-collapse supernovae from low-mass progenitors with implications for Crab

Monthly Notices of the Royal Astronomical Society, 496(2):2039-2084, August 2020 (article)

Kanagawa, M., Sriperumbudur, B. K., Fukumizu, K.

Convergence Analysis of Deterministic Kernel-Based Quadrature Rules in Misspecified Settings

Foundations of Computational Mathematics, 20, pages: 155-194, February 2020 (article)

Kersting, H., Sullivan, T. J., Hennig, P.

Convergence rates of Gaussian ODE filters

Statistics and Computing, 30(6):1791-1816, 2020 (article)

Wahl, N., Hennig, P., Wieser, H., Bangert, M.

Analytical probabilistic modeling of dose-volume histograms

Medical Physics, 47(10):5260-5273, 2020 (article)

2019

Tronarp, F., Kersting, H., Särkkä, S., Hennig, P.

Probabilistic Solutions To Ordinary Differential Equations As Non-Linear Bayesian Filtering: A New Perspective

Statistics and Computing, 29(6):1297-1315, 2019 (article)

Karvonen, T., Kanagawa, M., Särkkä, S.

On the positivity and magnitudes of Bayesian quadrature weights

Statistics and Computing, 29, pages: 1317-1333, 2019 (article)

Tronarp, F., Kersting, H., Särkkä, S., Hennig, P.

Probabilistic solutions to ordinary differential equations as nonlinear Bayesian filtering: a new perspective

Statistics and Computing, 29(6):1297-1315, 2019 (article)

Motta, A., Berning, M., Boergens, K. M., Staffler, B., Beining, M., Loomba, S., Hennig, P., Wissler, H., Helmstaedter, M.

Dense connectomic reconstruction in layer 4 of the somatosensory cortex

Science, 366(6469):eaay3134, 2019 (article)

Bartels, S., Cockayne, J., Ipsen, I., Hennig, P.

Probabilistic Linear Solvers: A Unifying View

Statistics and Computing, 29(6):1249-1263, 2019 (article)

2018

Kersting, H., Sullivan, T. J., Hennig, P.

Convergence Rates of Gaussian ODE Filters

arXiv preprint arXiv:1807.09737 [math.NA], July 2018 (article)

Kanagawa, M., Hennig, P., Sejdinovic, D., Sriperumbudur, B. K.

Gaussian Processes and Kernel Methods: A Review on Connections and Equivalences

arXiv e-prints, arXiv:1807.02582 [stat.ML], 2018 (article)

Muandet, K., Kanagawa, M., Saengkyongam, S., Marukatat, S.

Counterfactual Mean Embedding: A Kernel Method for Nonparametric Causal Inference

arXiv e-prints, arXiv:1805.08845v1 [stat.ML], 2018 (article)

Nishiyama, Y., Kanagawa, M., Gretton, A., Fukumizu, K.

Model-based Kernel Sum Rule: Kernel Bayesian Inference with Probabilistic Models

arXiv e-prints, arXiv:1409.5178v2 [stat.ML], 2018 (article)

Schober, M., Särkkä, S., Hennig, P.

A probabilistic model for the numerical solution of initial value problems

Statistics and Computing, 29(1):99-122, 2018 (article)

Wahl, N., Hennig, P., Wieser, H., Bangert, M.

Analytical incorporation of fractionation effects in probabilistic treatment planning for intensity-modulated proton therapy

Medical Physics, 45(4):1317-1328, 2018 (article)

2017

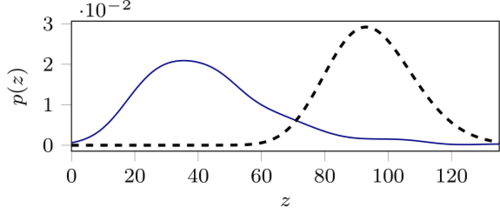

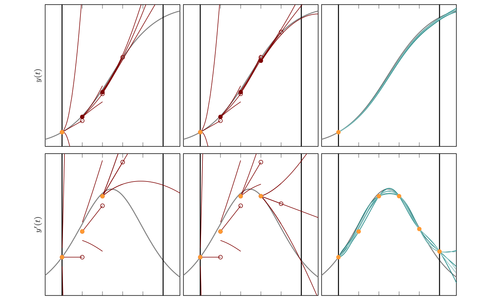

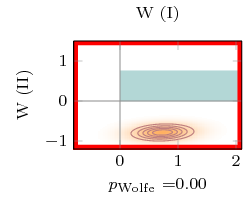

Mahsereci, M., Hennig, P.

Probabilistic Line Searches for Stochastic Optimization

Journal of Machine Learning Research, 18(119):1-59, November 2017 (article)

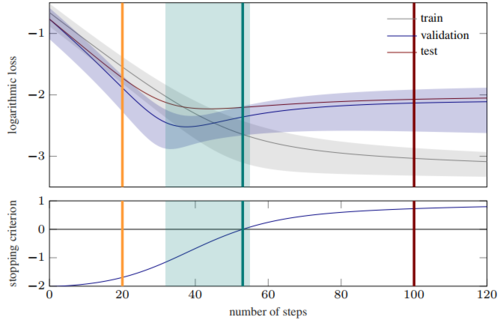

Mahsereci, M., Balles, L., Lassner, C., Hennig, P.

Early Stopping Without a Validation Set

arXiv preprint arXiv:1703.09580, 2017 (article)

de Roos, F., Hennig, P.

Krylov Subspace Recycling for Fast Iterative Least-Squares in Machine Learning

arXiv preprint arXiv:1706.00241, 2017 (article)

Gretton, A., Hennig, P., Rasmussen, C., Schölkopf, B.

New Directions for Learning with Kernels and Gaussian Processes (Dagstuhl Seminar 16481)

Dagstuhl Reports, 6(11):142-167, 2017 (article)

Wahl, N., Hennig, P., Wieser, H. P., Bangert, M.

Efficiency of analytical and sampling-based uncertainty propagation in intensity-modulated proton therapy

Physics in Medicine and Biology, 62(14):5790-5807, 2017 (article)

Wieser, H., Hennig, P., Wahl, N., Bangert, M.

Analytical probabilistic modeling of RBE-weighted dose for ion therapy

Physics in Medicine and Biology, 62(23):8959-8982, 2017 (article)

2016

Klenske, E. D., Zeilinger, M., Schölkopf, B., Hennig, P.

Gaussian Process-Based Predictive Control for Periodic Error Correction

IEEE Transactions on Control Systems Technology, 24(1):110-121, 2016 (article)

Klenske, E. D., Hennig, P.

Dual Control for Approximate Bayesian Reinforcement Learning

Journal of Machine Learning Research, 17(127):1-30, 2016 (article)

2015

Hennig, P.

Probabilistic Interpretation of Linear Solvers

SIAM Journal on Optimization, 25(1):234-260, 2015 (article)

Hennig, P., Osborne, M. A., Girolami, M.

Probabilistic numerics and uncertainty in computations

Proceedings of the Royal Society of London A: Mathematical, Physical and Engineering Sciences, 471(2179), 2015 (article)

2013

Hennig, P., Kiefel, M.

Quasi-Newton Methods: A New Direction

Journal of Machine Learning Research, 14(1):843-865, March 2013 (article)

Bangert, M., Hennig, P., Oelfke, U.

Analytical probabilistic modeling for radiation therapy treatment planning

Physics in Medicine and Biology, 58(16):5401-5419, 2013 (article)

2012

Hennig, P., Schuler, C.

Entropy Search for Information-Efficient Global Optimization

Journal of Machine Learning Research, 13, pages: 1809-1837, June 2012 (article)